How AI Encodes Meaning: A Business Owner’s Lever for Influencing Content

Why Does AI Output Feel So Generic?

You know that strange, almost hollow feeling when you read something the AI wrote for you? It sounds right, the words are spelled correctly, but the message isn’t… yours. When the output feels generic, even disconnected from your real intent, it’s hard not to get frustrated or wonder if you’re losing control of your own story. And yet, somewhat wildly, attribution methods in AI reportedly align strongly with how our brains process language, even outperforming other techniques at predicting activity in early language-processing areas.

I remember how surprised I was when I first started exploring how AI encodes meaning, realizing that machines could actually capture context and nuance—and not just shuffle cold data.

If you’ve been skeptical or just plain confused about where your real voice goes when AI gets involved, you’re not alone. Plenty of professionals I know (myself included, not that long ago) approach these tools thinking, “Sure, they’ll spit out something, but it’s going to be bland.” And when the draft you get back barely resembles the nuanced point you meant, it feels kind of personal—like technology is quietly sidestepping your expertise. If you’ve ever caught yourself fixing page after page and wondering why you even bothered with AI in the first place… yeah, I’ve been there too.

But here’s what finally clicked for me. Words aren’t magic.

Meaning Is Always Encoded

No word has universal truth. There’s nothing magic about “love” or “team” or even “report.” They’re just arbitrary symbols we all agreed on. At its core, language is a code. We swap squiggles and sounds and trust each other to read between the lines.

Think about the intent behind saying “Hello,” “Bonjour,” or waving your hand. All three do the same basic job. A wave and the word “hola” represent the same intent—just different encodings.

I’ll admit, this part tripped me up. Words seemed too abstract, too human. I used to think meaning was something special, tucked inside the letters themselves. It was unsettling to realize how much we rely on symbols to carry intent from one person to another.

There’s something freeing about realizing meaning lives underneath the surface. Six months ago I was still caught up in the idea that the words themselves carried some mystical charge. Once you see that meaning sits beneath the squiggles, you start to guide how your intent travels. No matter what language, tool, or AI you’re using.

Understanding encoding isn’t a technical hurdle. It’s a lever. When you know which levers you’re pulling, you get more choice, more voice, and more influence, even when a machine is helping you write. And honestly, that’s where the real power starts to show up.

How AI Encodes Meaning: Actually Encoding Your Words

Just as we encode intent into words, AI encodes words into tokens and then into embeddings, which is where meaning actually gets captured. Humans crave nuance and adapt context with every conversation, but large language models push for ruthless compression, optimizing for efficiency even if the richness thins out (arxiv.org). In other words, our language is always taking creative shortcuts, not that different from how AI shaves meaning into something the system can handle.
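To make the idea of embeddings a little more concrete, here is a minimal sketch. It assumes a tiny, hand-written lookup table (`EMBEDDINGS`) with invented three-number vectors; real models learn vectors with hundreds or thousands of dimensions, and nothing below comes from an actual model. The point is only that two different encodings of the same intent can sit close together, while an unrelated word sits far away.

```python
import math

# Hypothetical embeddings, invented for illustration only.
# Real models learn these values during training.
EMBEDDINGS = {
    "hello": [0.9, 0.1, 0.0],
    "bonjour": [0.8, 0.2, 0.1],
    "report": [0.0, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Two greetings point in nearly the same direction...
greeting_score = cosine_similarity(EMBEDDINGS["hello"], EMBEDDINGS["bonjour"])
# ...while a greeting and an unrelated word do not.
unrelated_score = cosine_similarity(EMBEDDINGS["hello"], EMBEDDINGS["report"])

print(f"hello vs bonjour: {greeting_score:.2f}")
print(f"hello vs report:  {unrelated_score:.2f}")
```

With these made-up numbers, the two greetings score far higher than the greeting and "report", which is the sense in which meaning lives in the geometry rather than in the spelling.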

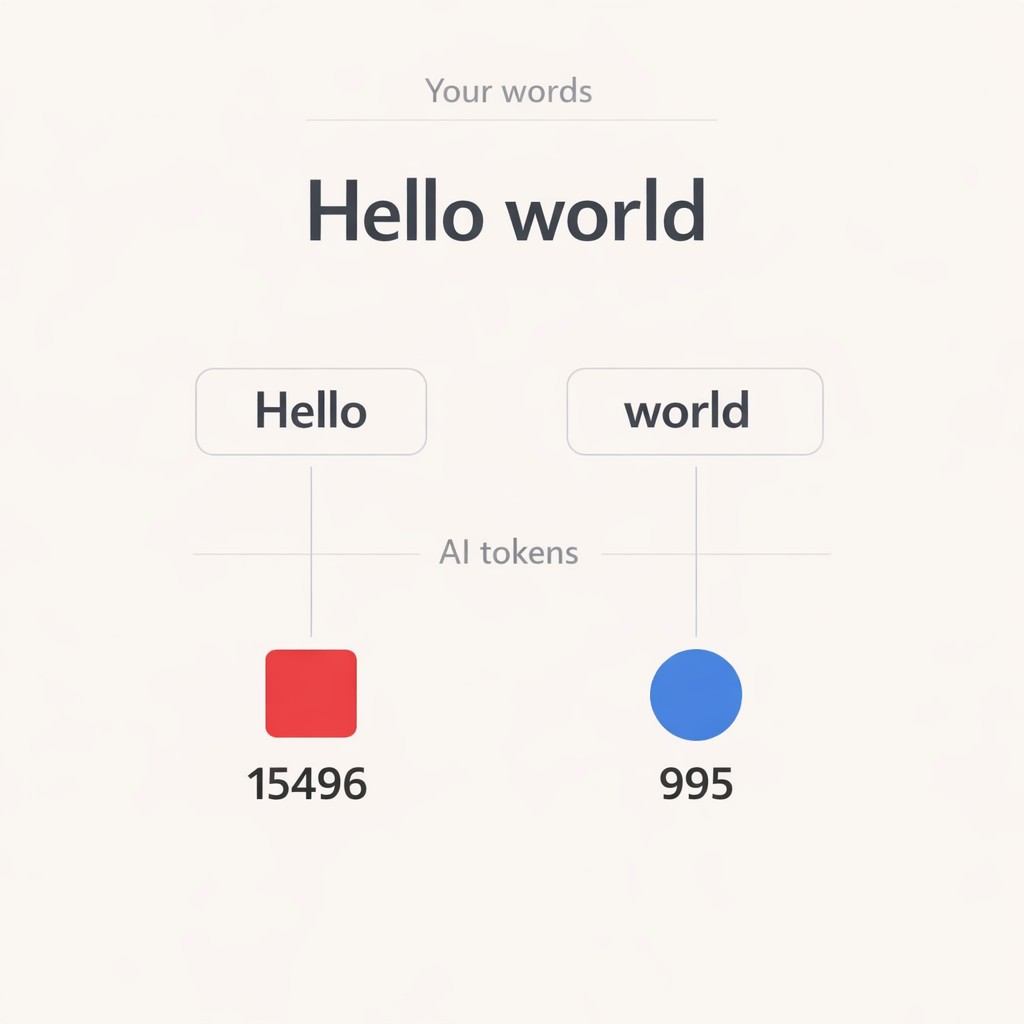

The first time I tried OpenAI’s tokenizer tool, AI tokenization became real for me. I didn’t know what to expect. I dropped in a sentence and watched as it snapped apart, not at every word but at oddly specific spots. Spaces, punctuation marks, fragments of words I thought belonged together. Seeing “Hello world” split into two tokens, with the space attached to the second one, made it weirdly clear: AI isn’t reading text the way we do. It’s mapping patterns in chunks, not full sentences. The way common words showed up as single tokens, while unusual ones got broken down further, started to reveal a logic I hadn’t anticipated. There was a quiet thrill in noticing how “and,” “the,” “cat” all held their own neat places, while a technical term fragmented into several pieces.

This wasn’t magic. It was systematic.

For instance, when you type “Hello world” into a tokenizer, you get [“Hello”, “ world”] as tokens. Then those become numbers, like [15496, 995]. “The cat” becomes [“The”, “ cat”], each with its own number. The model then transforms these numbers into internal states that let it recognize and reconstruct context from the encoded information. Suddenly, the invisible process feels tangible. You can actually see the layers that shape what the AI “remembers.”
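The text-to-numbers step can be sketched in a few lines. This is a toy, not how a production tokenizer works: it assumes a four-entry vocabulary (`VOCAB`) and a simple greedy longest-match lookup, whereas real tokenizers learn tens of thousands of entries and apply merge rules. The IDs for “Hello” and “ world” are the ones quoted above; the IDs for “The” and “ cat” are placeholders I made up.

```python
# Toy vocabulary. 15496 and 995 are the IDs quoted in the article;
# 1 and 2 are made-up placeholders, not real tokenizer IDs.
VOCAB = {"Hello": 15496, " world": 995, "The": 1, " cat": 2}


def encode(text, vocab=VOCAB):
    """Turn text into token IDs by always taking the longest matching piece."""
    ids = []
    pos = 0
    while pos < len(text):
        # Try the longest remaining slice first, then shrink it.
        for end in range(len(text), pos, -1):
            piece = text[pos:end]
            if piece in vocab:
                ids.append(vocab[piece])
                pos = end
                break
        else:
            raise ValueError(f"no token for text starting at {text[pos:]!r}")
    return ids


def decode(ids, vocab=VOCAB):
    """Map token IDs back to their text pieces and join them."""
    reverse = {token_id: piece for piece, token_id in vocab.items()}
    return "".join(reverse[token_id] for token_id in ids)


hello_ids = encode("Hello world")
print(hello_ids)          # [15496, 995]
print(decode(hello_ids))  # Hello world
```

Notice that the space travels with “ world” rather than standing alone, which is why decoding the IDs gives back the original text exactly.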

Where things got interesting (okay, frustrating) was bumping into token limits for the first time. There’s only so much the model can process at once. A 1,000-word essay might run into a cold wall if it breaks down into too many tokens. I hadn’t realized how these ceilings would impact what the AI could keep track of. Hitting that boundary shifted my view of the whole system. It’s not just about asking smarter questions, but also understanding the actual bandwidth you’re negotiating with.

Sometimes what you want to say literally runs out of space in the machine’s world. That limitation isn’t a deal-breaker. It’s a boundary you can play within, once you know it’s there.
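If you want a feel for that boundary before you hit it, a rough estimate is enough. The sketch below assumes the common rule of thumb that English text averages about four characters per token; that is an approximation, not an exact count, and the limits in the example are hypothetical numbers rather than any particular model’s. The function names are mine.

```python
import math

# Rough rule of thumb for English text. Actual counts vary by tokenizer,
# language, and vocabulary, so treat this as an estimate only.
CHARS_PER_TOKEN = 4


def estimate_tokens(text):
    """Estimate how many tokens a piece of text will use."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_budget(prompt, context_limit, reserved_for_reply):
    """Check whether the prompt leaves enough room for the model's reply."""
    return estimate_tokens(prompt) + reserved_for_reply <= context_limit


# A stand-in for a 1,000-word essay: 1,000 repetitions of "word ".
essay = "word " * 1000
essay_tokens = estimate_tokens(essay)

print(essay_tokens)                                                      # 1250
print(fits_budget(essay, context_limit=4096, reserved_for_reply=500))    # True
print(fits_budget(essay, context_limit=1500, reserved_for_reply=500))    # False
```

Reserving space for the reply matters because the prompt and the response share the same window: a prompt that fits on its own can still leave too little room for the answer.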

At one point, I got obsessed with whether spaces at the end of a sentence mattered to the tokenizer. I spent a weird hour feeding in variations (“Good morning”, “Good morning ”, “Good morning”), basically just arguing with myself over whether the extra space changed the outcome. It was oddly satisfying to see how a stray space could break things up and shift token counts. That didn’t solve anything, but it felt like a little window into the machine’s logic, and now I notice those details way more than I used to.
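You can reproduce the spirit of that experiment without any special tooling. The sketch below uses a simplified splitting pattern that I wrote for illustration; it is loosely modeled on the way some tokenizers first cut text into chunks with a leading space attached, and it is not the rule any specific tokenizer uses. It only shows why a trailing space can end up as its own piece.

```python
import re

# Simplified, illustrative pattern: a word, number, or run of punctuation
# may carry one leading space; any leftover whitespace becomes its own chunk.
CHUNK_PATTERN = re.compile(r" ?[A-Za-z]+| ?\d+| ?[^\sA-Za-z\d]+|\s+")


def split_chunks(text):
    """Split text into chunks, keeping every character (including spaces)."""
    return CHUNK_PATTERN.findall(text)


no_space = split_chunks("Good morning")
trailing_space = split_chunks("Good morning ")

print(no_space)        # ['Good', ' morning']
print(trailing_space)  # ['Good', ' morning', ' ']
```

The trailing space has no word to attach to, so it becomes an extra chunk, and the count goes from two to three.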

Framing AI as a System—Not a Black Box

Let’s pause on that old idea that AI is just a mysterious black box. It isn’t. What’s actually happening is systematic. More like a locked toolbox than a magic trick. Once you start seeing it this way, the fog lifts fast.

Here’s the edge it gives you. When you know your message gets encoded step by step, you can start steering. Instead of throwing wishes into an algorithm and crossing your fingers, you map how your meaning travels. So your prompts shape the AI’s response in ways that actually fit your goals. It’s not guesswork.

The power comes from the framing itself: it cuts down the back-and-forth cycle, stabilizes iteration, and pushes the output closer to what you truly meant.

Of course, that all sounds good until you hit the real world and start worrying, “Will I need to learn a bunch of technical stuff?” If the terms “tokenization” or “embeddings” sound intimidating, I get it. I felt the same way at first. Like I was missing a prerequisite. The good news? You don’t have to master the machinery. You just need enough to guide the outcomes.

Before, I’d just hand the AI a vague prompt and hope it caught my drift. Like, “summarize our Q4 strategy.” Nine times out of ten, the response read like an intern’s first draft. Factual, safe, and totally missing my point about why the strategy even mattered. That’s when I started mapping the non-negotiable parts of my message—the context, the intended tone, the actual stakes—right into the prompt. Back in that earlier section where a space could break a token apart, I found myself treating my words almost like puzzle pieces, making sure each part fit together in a way the model could actually handle. So I’d say: “Summarize our Q4 strategy, but highlight that customer trust is the north star, and explain how the global market uncertainty directly shaped our approach. Use examples specific to healthcare, and make sure it feels honest, not corporate.” The difference was real. The AI’s summary finally echoed the values and expertise I needed to convey. Not because the system suddenly ‘understood’ me, but because I was intentionally encoding meaning with each direction I gave.

Instead of hoping for nuance, I structured it. The whole process became less frustrating. Instead of feeling boxed in by blandness, I started getting outputs that belonged to me, not just to the machine. By adjusting my prompts and being specific, I could make sure the generated responses reflected my intent and context much more accurately.

Making Your Meaning Stick: Practical Steps for Lasting Control

You’re not at the mercy of the machine. Control is possible. Even if the process felt murky at first, you can absolutely use a few simple moves to help your intent land just as you want. I didn’t believe that at first either, but it’s true.

Mapping your intent starts with clarity. Name your core message first: what is the thing you want to survive, even if every other detail is compressed? Break big ideas into bite-sized chunks. It’s much easier for both humans and AI to get discrete pieces right. Lean on examples to anchor your meaning. Even a single sharp example can do more work than three fluffy generalities. If tone matters (“make this confident but not salesy,” or “use medical terminology lightly for non-experts”), say that outright, and don’t assume the system will just “get” your context. Real prompting skill isn’t just the right words. It’s seeing the bigger picture (intent, conversation flow, even model quirks) and applying context engineering to shape how meaning lands (ibm.com).
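If it helps to see those steps as a checklist, here is one way to sketch them. The `PromptBrief` structure and `build_prompt` function are names I made up for illustration, not part of any tool, and the wording of each line is just one reasonable choice. The example values reuse the Q4 strategy prompt from earlier.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the parts of a message that must survive."""
    task: str
    core_message: str
    context: str = ""
    tone: str = ""
    examples: list = field(default_factory=list)


def build_prompt(brief):
    """Assemble the brief into a prompt, skipping any parts left empty."""
    lines = [brief.task, f"Core message to preserve: {brief.core_message}"]
    if brief.context:
        lines.append(f"Context: {brief.context}")
    if brief.examples:
        lines.append("Use examples such as: " + "; ".join(brief.examples))
    if brief.tone:
        lines.append(f"Tone: {brief.tone}")
    return "\n".join(lines)


q4_brief = PromptBrief(
    task="Summarize our Q4 strategy.",
    core_message="Customer trust is the north star.",
    context="Global market uncertainty directly shaped our approach.",
    tone="Honest, not corporate.",
    examples=["cases specific to healthcare"],
)
q4_prompt = build_prompt(q4_brief)
print(q4_prompt)
```

Writing the brief down first forces the core message, context, examples, and tone to be stated explicitly, so nothing depends on the model guessing what you meant.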

That moment where I first saw the system’s layered process? At first, it honestly felt like a wall—a limit I’d never get past. But with just a little digging, that wall became a lever I could move. What surprised me turned out to be my best tool.

One thing I still haven’t totally figured out: sometimes, even when I feel like I’ve nailed the intent and context, the output drifts back to sounding… just a bit too clean. As I keep experimenting, I realize that making AI more transparent for myself is an ongoing job: continuously tweaking prompts, observing patterns, and learning how my instructions affect the system’s output. Maybe it’s the model. Maybe it’s me. I keep poking at it and haven’t cracked the code yet, but the act of mapping and encoding makes it easier each time. I guess some friction is just baked in.

So here’s what’s next. When you start applying these steps and see how encoding works, you stop being a mere user. You’re actually designing the outcome. Even in those busy, five-minute bursts, you’re shaping meaning like a strategic architect.

See how your intent translates into real content—try Captain AI for free and get an article that actually captures your expertise, message, and voice without the usual generic output.