Defining New Tech Roles: How to Spot What’s Missing

The Role Confusion No One Warns You About in Defining New Tech Roles

I remember the exact moment I tried to define what an “AI Engineer” actually does—and hit a wall. Titles felt squishy, especially once you tried to pin them down to specific outcomes. Five minutes in, I was questioning whether I even understood my own job.

The instinct, every time, is to play it safe and reach for what we already know. Call it a Data Scientist, an Architect, maybe a Software Engineer if we’re feeling vague. The problem is, we know that leaves all the real questions hanging—what’s this person actually responsible for, and where does their job stop?

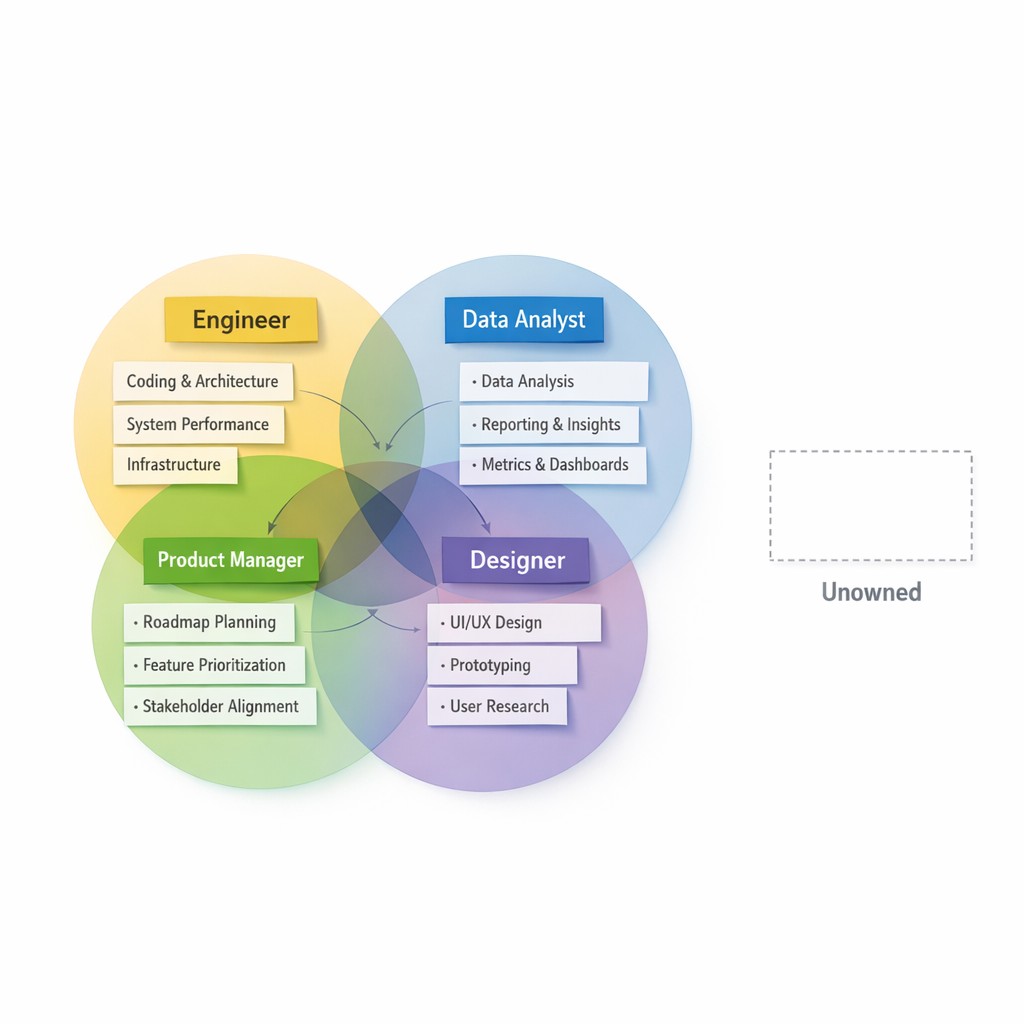

In the process of defining new tech roles, I found myself mapping every title I’d worked under (and plenty I’d worked with). Data Scientists chased signals in the data. Architects made the big technology bets before anyone wrote code; they’re building the house AI moves into. Software Engineers, meanwhile, made sure everything was glued together and stayed running. But none of those jobs, not a single one, was really about the practical work of shaping how an AI system behaves, as opposed to whether it works on paper or runs in production.

It finally clicked. None of those roles cover who makes the AI actually behave—what tools it can use, how it responds, whether it’s working. Someone has to decide those messy middle questions, but the old role templates don’t reach that far.

Pause for a second. If you look at your team structure right now, do you know who’s responsible for making your AI behave the way users expect? This is where clarifying job responsibilities becomes crucial. When I first noticed the gap, it was a little unsettling, at least until it became clearer where to start.

Mapping Responsibilities to Reveal the Gaps

The best starting point is putting pen to paper. Literally listing out every existing role on the team, then jotting down what each person actually does day to day. It’s simple, but the clarity sneaks up on you. I kept it loose at first, names and then bullets for what “ownership” really looked like this week.

Take the Data Scientist or ML Engineer. These are your model people, and they’re all about extract, learn, predict—drilling into the data, running experiments, tuning parameters. If you need a model built, they’re on it. But here’s the thing. The model doesn’t improve itself. There’s usually a chasm between what they optimize in the lab and what actually happens once the system is live, messy, and exposed to weird things users do.

Architects are different. Their work is mostly upstream. They set the guardrails, pick stacks, and chart platform direction. Kind of like making the big technology bets before anyone writes code. You talk to them before anything tangible exists, then hope the choices still fit when reality hits.

Software Engineers? Their hands are on the keyboard once the platform vision is set. They bring it to life. But shaping the specific day-to-day behavior of the AI, or making sure its interactions adjust in real time? That’s not in their swim lane.

So what’s missing? When you actually do the mapping, the gap is obvious: it’s that operational layer no one claims. Prompt pipelines, model orchestration, context management. The connective tissue that makes the AI responsive, safe, practical. And when you dig into role mapping, you spot at least four types of responsibility gaps (culpability, moral accountability, public accountability, and hands-on ownership layer gaps), each requiring its own approach (Springer). In short, mapping role gaps is essential to understanding where accountability breaks down.

Off on a bit of a tangent here, but I’m remembering the time I tried to brute-force this gap out of existence. Maybe two years ago, after a badly handled production incident, I just started stacking meeting invites on people’s calendars—literally color-coded them, made an epic spreadsheet. I figured, surely if we talked enough someone would step up and own the mess. But all we got was a couple weeks of polite nodding and follow-ups that led nowhere. Eventually someone joked that meetings were our “AI Operator.” That stung because it was true, and nothing changed until we actually gave someone the job for real.

Looking back, “Let’s align” seemed easier than inventing a new role. But all it did was make everyone fuzzier on who actually owned what. Turns out, talking about ownership isn’t the same as assigning it.

Why New Titles Are About Accountability, Not Jargon

If you’re mapping responsibilities, forget the titles for a second. Start with what’s genuinely missing on your team—what’s left undone, not what you called the last hire.

Here’s what finally crystallized things for me. I kept noticing that someone has to make the AI actually behave. That’s the job. Not a “vibe” but actual, measurable, day-to-day behavior. And I remember the moment it clicked: early 2025, staring at a bug report that didn’t fit anyone’s swim lane, realizing we didn’t have someone responsible for outcomes we could hold up and measure.

So, what does an ‘AI Engineer’ actually do? Think prompt pipelines—setting up clear interfaces so prompting isn’t a giant hack. Model orchestration, knowing which model to route a request to and when. Context management, so your AI remembers the right things and forgets the rest. They build test datasets and scoring rules that hold systems accountable when outputs start to slip. They own regression checks, production monitoring (real monitoring, not “it didn’t crash”), and all the glue that brings humans into the loop, especially when the AI is likely to fail in edge cases.
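To make the “test datasets and scoring rules” part a bit more concrete, here is a minimal Python sketch of a regression check. Everything in it is an assumption for illustration: `exact_match`, `regression_check`, the two-question dataset, and the stub systems stand in for whatever your real pipeline and scoring rules look like.

```python
from statistics import mean
from typing import Callable


# Hypothetical scoring rule: 1.0 for a case-insensitive exact match, else 0.0.
def exact_match(expected: str, actual: str) -> float:
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def regression_check(
    system: Callable[[str], str],
    cases: list[tuple[str, str]],
    baseline: float,
    tolerance: float = 0.05,
) -> tuple[float, bool]:
    """Score the system on every case; pass if the mean stays near the baseline."""
    score = mean(exact_match(expected, system(prompt)) for prompt, expected in cases)
    return score, score >= baseline - tolerance


# Illustrative test dataset (made up for this sketch).
CASES = [("Capital of France?", "Paris"), ("2 + 2 = ?", "4")]


# Stub "AI systems" standing in for a real model call.
def healthy_system(prompt: str) -> str:
    return {"Capital of France?": "paris", "2 + 2 = ?": "4"}[prompt]


def degraded_system(prompt: str) -> str:
    return {"Capital of France?": "Lyon", "2 + 2 = ?": "4"}[prompt]


if __name__ == "__main__":
    print(regression_check(healthy_system, CASES, baseline=0.9))
    print(regression_check(degraded_system, CASES, baseline=0.9))
```

The point of the sketch is the shape, not the scoring rule: a fixed dataset, an explicit score, and a threshold that someone owns. Real systems usually need fuzzier scoring than exact match.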

The more I wrote out these specific behaviors, the more obvious the gap became. It’s not just “making the model work”—it’s making the system behave in ways you can trust, day after day.

Could you patch these responsibilities onto legacy roles? Maybe, but only if you’re okay with confusion or crucial stuff slipping through the cracks. Data scientists call it “done” when the model is trained. Architects close the loop on design reviews. Software engineers keep the infrastructure healthy. This gap asks for accountability that doesn’t naturally fit in their priorities. And you’ll pay for it, eventually, in reliability or speed.

If you’re skeptical whether this exercise is worth it, I get it—half of me thought I was inventing busywork. But when the system broke, it was always the “gaps” that hurt the most. If you care about results, not just org charts, closing those gaps is what actually moves the needle.

How to Systematically Close the Gaps

Here’s a process that works because it forces clarity. First, list every current role and what each person really owns (not what their title says). Next, ask bluntly—what’s slipping through? What practical outcome is nobody’s job? Once you spot it, get specific. Write down exactly what you want this missing role to deliver, not the tasks but the results.
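If you’d rather do this in a script than on paper, the process above can be sketched in a few lines of Python. This is only an illustration: the `Role` class, `find_gaps`, and the sample team and outcomes are hypothetical names I made up, and the outcome labels are loosely borrowed from the roles described earlier.

```python
from dataclasses import dataclass, field


@dataclass
class Role:
    title: str
    # Outcomes this role really owns, not what the title implies.
    owns: set[str] = field(default_factory=set)


def find_gaps(roles: list[Role], needed_outcomes: set[str]) -> list[str]:
    """Return the outcomes nobody owns, sorted for stable output."""
    owned = set().union(*(role.owns for role in roles))
    return sorted(needed_outcomes - owned)


# Hypothetical team, for illustration only.
TEAM = [
    Role("Data Scientist", {"model training"}),
    Role("Architect", {"stack selection"}),
    Role("Software Engineer", {"infrastructure health"}),
]

# Results the team needs delivered, whoever ends up owning them.
NEEDED = {
    "model training",
    "stack selection",
    "infrastructure health",
    "prompt pipelines",
    "model orchestration",
    "context management",
}

if __name__ == "__main__":
    for outcome in find_gaps(TEAM, NEEDED):
        print("Nobody owns:", outcome)
```

Anything the function returns is a candidate for the missing role’s job description.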

This exercise isn’t just a box-check. Back when I finally committed stuff to paper, the mess shifted from vague anxiety to a map I could actually work with. Suddenly, accountability wasn’t some abstract “culture problem.” It was a post-it on someone’s desk.

Want a cheat sheet? If you’re not sure whether there’s a gap, try this. Scan your incident history from the last six months and notice which problems never get assigned to a person but keep returning. Or, look for anything repeatedly “monitored” by committee. Ask: Who investigates unexpected outputs? Who’s in charge of ensuring feedback loops between users and the system aren’t broken? Who actually measures whether system updates help or hurt? If you can’t put a single name or role on it, you’ve found your gap.
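The incident-history scan can be roughed out in code, too. Here is a minimal Python sketch, assuming you can export incidents with a category, an opened date, and an owner. The `Incident` fields, the `recurring_unowned` function, and the sample data are all hypothetical; your tracker’s export will look different.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Incident:
    category: str
    opened: date
    owner: Optional[str] = None  # None means it was never assigned to a person


def recurring_unowned(
    incidents: list[Incident], since: date, min_count: int = 2
) -> list[str]:
    """Categories that recur within the window and were never assigned an owner."""
    owners_by_category: dict[str, list[Optional[str]]] = defaultdict(list)
    for incident in incidents:
        if incident.opened >= since:
            owners_by_category[incident.category].append(incident.owner)
    return sorted(
        category
        for category, owners in owners_by_category.items()
        if len(owners) >= min_count and all(owner is None for owner in owners)
    )


# Made-up incident history for illustration.
INCIDENTS = [
    Incident("unexpected output", date(2025, 1, 10)),
    Incident("unexpected output", date(2025, 2, 3)),
    Incident("unexpected output", date(2025, 3, 21)),
    Incident("latency spike", date(2025, 1, 15), owner="on-call engineer"),
    Incident("latency spike", date(2025, 2, 20), owner="on-call engineer"),
    Incident("stale context", date(2025, 3, 1)),
    Incident("broken feedback loop", date(2024, 6, 1)),
    Incident("broken feedback loop", date(2024, 7, 1)),
]

if __name__ == "__main__":
    # Window start is passed in explicitly; roughly six months back in this example.
    print(recurring_unowned(INCIDENTS, since=date(2024, 10, 1)))
```

In this sample, only the category that keeps coming back without an owner inside the window is flagged. One-off incidents and anything already assigned are left out.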

I’ve seen what happens when you skip this. When responsibility mapping gets ignored, missing incident reports, unchecked organizational errors, and failures that go unaddressed by legal and risk teams become the norm. It’s not dramatic. AI systems just quietly get worse, problems slip by, and the accountability debt mounts up.

None of this is unique to AI. Understanding emerging roles is key, because any new piece of technology brings the same ambiguity, and the same need to make responsibility visible, specific, and tractable from day one.

Building Teams That Actually Change Things

Defining roles isn’t just HR paperwork. It’s a core lever for business strategy. The difference comes when you focus on outcomes, not just labels. Teams get real leverage when subject matter experts join agile pods, giving direct on-the-ground feedback and reducing wasted downstream effort.

Maybe you’re still skeptical. Honestly, bridging skills in tech always felt like a “nice to have” until I saw how much faster (and more accountable) a team moves once the fog lifts. That shift in my own thinking—seeing ambiguity as the actual blocker—made all the difference.

Here’s the summary, in plain terms. Start by mapping what roles really do. Spot the gaps. Write them down. Invite your team to do the same—just seeing who owns what (and what’s left hanging) is how you actually start closing the cracks.

It took some thinking, but here’s how it turned out. Years back, everything about AI roles felt ambiguous; now, mapping the gaps is simply how I make systems work for real, not just in theory.

I’ll be honest—there are still mornings when the boundaries get blurry, especially as everything shifts under your feet. I haven’t found a perfect formula that covers every org or every new technology. Maybe I never will. Mapping the gaps just gets the big rocks out of the way. The rest, I’m still working on.
