Choosing Simple vs Complex Tools: Proven Methods for Consistent Results

Why We Keep Reaching for AI—And Why It’s Costing Us

If you’ve ever spent a morning wrestling with an AI tool to do something simple, you’re not alone. I find myself burning hours and energy on tasks that—looking back—could have been handled by something much less glamorous. It’s almost a reflex at this point, and I know I’m not the only one.

Here’s the moment it clicked for me. When I needed to assign 100 keywords across 10 topics based on best fit, I did what felt natural—asked the LLM to figure it out. At the time, using a big, general model felt like the obvious, modern answer.

But then I got stuck. I tried rewording prompts, shuffling the keyword order, even piped in extra examples hoping it would help. No matter how much I tweaked, the output plateaued—never quite accurate, always a bit off.

I consider myself an AI power user. But that’s exactly my blind spot. When you’re steeped in advanced tools, it’s easy to overcomplicate a problem and lose sight of the simpler way through. Choosing between a simple and a complex tool is a decision in its own right, and I was skipping it.

Here’s the shift. Not every content or classification job needs to be handled by AI. A lot of stalled progress is totally avoidable. If you’ve been spinning your wheels, you’re probably closer to an efficient solution than you think.

When “Asking AI” Fails: LLMs and the Limits of Intuition

The first warning sign was hard to ignore. The distribution came out biased. I’d ask the model to sort items by fit, only to get lopsided answers—sometimes favoring one category, sometimes spreading things out too evenly. There was no consistency. Some days it was off by a little, others by a lot.
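That kind of skew is easy to quantify: count how many items land in each category, run after run. Here is a minimal Python sketch. The keywords, the three topics, and the `llm_run` result are hypothetical placeholders, not data from the original experiment.

```python
from collections import Counter


def topic_distribution(assignments: dict[str, str], topics: list[str]) -> dict[str, int]:
    """Count how many keywords landed in each topic, including topics that got none."""
    counts = Counter(assignments.values())
    return {topic: counts.get(topic, 0) for topic in topics}


# Hypothetical output from one LLM run: keyword -> chosen topic.
llm_run = {
    "running shoes": "fitness",
    "trail maps": "fitness",
    "protein bars": "fitness",
    "budget apps": "finance",
}
topics = ["fitness", "finance", "travel"]

dist = topic_distribution(llm_run, topics)
print(dist)  # {'fitness': 3, 'finance': 1, 'travel': 0}
```

Running the same count over several runs on identical input makes the inconsistency visible. If the numbers shift from run to run, the assignment process isn’t deterministic.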

Naturally, I doubled down on fixing it. I changed the prompt, tried more system messages, and threw in extra data snippets, all hoping for that sweet spot. I kept prompt-engineering around the problem, going back to the LLM again and again even though a simpler option existed. Nothing stuck; the weirdness just shifted shades.

Then it hit me. I was asking AI to intuit something that’s actually just math. All those tweaks and second-guessing? Completely sidestepping a straightforward, deterministic solution that never would have gotten tripped up in the first place.

The real strength of LLMs is reasoning in context, generating text, filling in conversational gaps, and dealing with ambiguity, especially anywhere language or logic is flexible. The flip side matters just as much. When you need consistent, repeatable, and fair distribution, especially across large sets, these models stumble. Their “intuition” isn’t arithmetic, and the way they mimic patterns can amplify subtle biases or inject randomness where none should exist. Keeping that in mind is the easiest way to avoid overengineering with AI.

If this has happened to you, you’re not missing some clever trick. Sometimes the problem really is better solved by simple math than by more elaborate prompts. Trust your healthy skepticism. It’ll save you the detour.

Embeddings: The Overlooked Power Move

We’re so focused on LLMs that we miss the other tools quietly becoming more powerful alongside them. Embeddings have been in the background for years—old news, in tech terms—but somehow, many of us ignored how far they’ve come. I was busy prompting my way through problems that embeddings had quietly grown ready to solve.

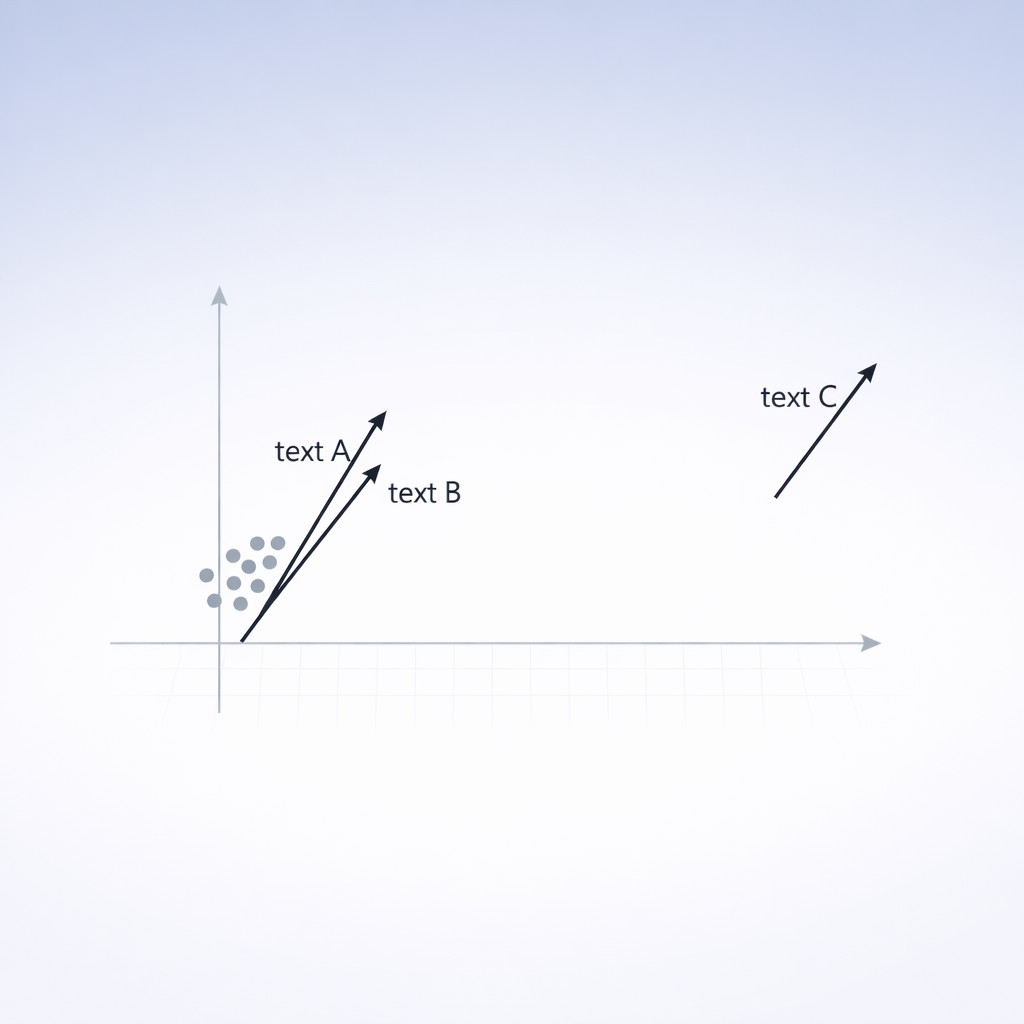

So, what are embeddings, really? Picture this. Every piece of data (a word, a sentence, even an image) gets packed into a string of numbers, a vector, in a multidimensional space. Then the magic is math. To check whether two things are similar, you measure the angle between their vectors. The math term for this is cosine similarity. That number tells you, precisely and repeatably, how close two ideas, sentences, or even products are. It’s simple and consistent, and the software needs no “gut feeling.”
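Cosine similarity is the dot product of two vectors divided by the product of their lengths: cos θ = (a · b) / (‖a‖ ‖b‖). It ranges from 1 (same direction) through 0 (unrelated) to −1 (opposite). Here is a minimal pure-Python sketch. The toy 3-dimensional vectors stand in for real embeddings, which typically have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


# Toy vectors standing in for real embeddings.
print(cosine_similarity([1.0, 0.0, 0.0], [2.0, 0.0, 0.0]))  # 1.0 (same direction)
print(cosine_similarity([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]))  # 0.0 (orthogonal)
```

Only direction matters, not length. That is why `[1, 0, 0]` and `[2, 0, 0]` score a perfect 1.0.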

Why does this matter? Embeddings don’t have ordering bias. They don’t get lazy at the end of a list. They don’t hallucinate a “best fit.” They calculate distance. That’s it. On top of that, post-processing steps such as renormalizing the vectors can further improve embedding performance for retrieval and classification. They’re lightweight, model-agnostic fixes that make results more reliable.
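The renormalization techniques aren’t named here. One common, lightweight post-processing step, shown only as an illustration and not necessarily what’s meant, is mean-centering followed by L2 normalization. A shared offset across all vectors inflates every pairwise cosine score. Removing it and rescaling each vector to unit length lets the remaining differences drive the comparison. The `raw` vectors are hypothetical.

```python
import math


def mean_center(vectors: list[list[float]]) -> list[list[float]]:
    """Subtract the per-dimension mean so the set of vectors is centered at the origin."""
    dims = len(vectors[0])
    means = [sum(v[d] for v in vectors) / len(vectors) for d in range(dims)]
    return [[v[d] - means[d] for d in range(dims)] for v in vectors]


def l2_normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length; afterwards a plain dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


# Hypothetical embeddings that all share a large offset in the first dimension.
raw = [[5.0, 1.0, 0.0], [5.0, 0.0, 1.0], [5.0, -1.0, -1.0]]
processed = [l2_normalize(v) for v in mean_center(raw)]

for v in processed:
    print(round(math.sqrt(sum(x * x for x in v)), 6))  # 1.0 for each vector
```

Before this step, the first two raw vectors look nearly identical (cosine ≈ 0.96) because of the shared offset. After it, they are orthogonal, which reflects how they actually differ.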

You might still be thinking embeddings sound like something only researchers mess with. Turns out they aren’t. Between OpenAI’s API, vector databases, and open-source models, this isn’t PhD territory anymore. If you can wrangle a spreadsheet, you can play with embeddings.

And they’re cheap. And fast. And they’ve been around for over a decade. When something delivers the same or better results than an LLM, with less cost, friction, and headache, it’s worth getting comfortable with it.

Odd thing I noticed, actually—I was up late one night, comparing outputs from an LLM versus embeddings on my old laptop. I got so caught up in debugging a Python script that I missed my dog’s dinner time. He sat by the door with this “are you seriously going to make me wait for cosine similarity?” look. Anyway, hindsight: the embeddings finished the job before I even noticed, while the LLM kept circling around my prompt tweaks. Sometimes, reminders to keep it simple don’t come from dashboards or builds—they show up as annoyed pets.

Simpler Tools, Better Results: Efficiency Over Elegance

Let’s face it. Choosing a “simple” method feels a bit risky. You might worry you’ll lose nuance, or end up cornered when the task gets weird. But there’s no shame in reaching for what actually works. The point isn’t to do less; it’s to work smarter.

Truth is, flexibility isn’t measured by tool complexity. It’s about fitting the right process to the problem. When you reach for straightforward, deterministic tools, you get their main benefit: results that arrive faster and that you can trust. The showy AI stuff only matters if it helps, not just because it’s new.

And honestly, I get pulled in by shiny tech all the time. It’s a familiar trap. You see an exciting new gadget or a flashy software update, and suddenly you’re convinced your workflow isn’t just lacking; it’s outdated. I’ve upgraded phones only to spend weeks figuring out a new interface, all for basically the same calls and texts. There’s something about novelty that whispers “progress,” even when your old tool would’ve handled things more easily.

The appeal of advanced AI is no different. Feels futuristic, but sometimes all it does is complicate things you solved years ago. I’ve spent days trying to automate something with the latest model, only to realize I could do it with a spreadsheet in ten minutes. We chase innovation… and wind up tangled in unnecessary complexity.

That’s why looping back to the keyword assignment problem mattered. When I swapped intuition-driven prompting for embeddings, accuracy went up and my process sped up. Expertise stayed intact, but the work got way simpler.
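Here is a minimal sketch of that swap. It assumes you already have an embedding vector for every keyword and every topic, for example by embedding each topic’s name or a short description. The post doesn’t spell out the exact method. This version uses the simplest reading: each keyword goes to the topic with the highest cosine similarity. The `assign_keywords` helper and the toy 3-dimensional vectors are hypothetical. Real vectors would come from an embedding model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def assign_keywords(
    keyword_vecs: dict[str, list[float]],
    topic_vecs: dict[str, list[float]],
) -> dict[str, str]:
    """Assign every keyword to the single topic with the highest cosine similarity."""
    assignments = {}
    for keyword, kvec in keyword_vecs.items():
        # Iterate topics in sorted order; max() keeps the first of any tied scores,
        # so ties always resolve to the alphabetically first topic.
        assignments[keyword] = max(
            sorted(topic_vecs),
            key=lambda topic: cosine_similarity(kvec, topic_vecs[topic]),
        )
    return assignments


# Hypothetical toy vectors; real ones would come from an embedding model.
topics = {
    "fitness": [1.0, 0.0, 0.0],
    "finance": [0.0, 1.0, 0.0],
    "travel": [0.0, 0.0, 1.0],
}
keywords = {
    "running shoes": [0.9, 0.1, 0.0],
    "budget apps": [0.1, 0.8, 0.1],
    "cheap flights": [0.0, 0.4, 0.7],
    "trail maps": [0.5, 0.0, 0.6],
}

result = assign_keywords(keywords, topics)
print(result)
# {'running shoes': 'fitness', 'budget apps': 'finance', 'cheap flights': 'travel', 'trail maps': 'travel'}
```

Each keyword is scored independently against every topic, so shuffling the keyword list can’t change the outcome. Running it twice gives the same answer both times.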

If you’re running a content operation or managing business workflows, ask yourself: is the task about picking, matching, or sorting based on clear criteria? If so, lean toward mathematical approaches such as embeddings, vector search, and classic ranking algorithms. Save AI reasoning for cases where rules break down, meaning is foggy, or you need deep analysis. When the task genuinely calls for judgment, reach for an LLM’s intuition. When you want reproducible answers, trust the math. Efficiency isn’t about skipping steps; it’s about getting answers you can depend on, fast.
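For many “picking and sorting” tasks, a brute-force nearest-neighbor search covers the vector-search case before you need a vector database. Here is a minimal sketch. `top_k`, the query, and the catalog vectors are hypothetical. Once the item count grows, a real vector database would replace this linear scan with an index.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query: list[float], items: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Brute-force vector search: the k items most similar to the query, best first."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in items.items()]
    # Sort by score (descending), then by name, so ties always rank the same way.
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:k]


# Hypothetical query and catalog vectors.
query = [1.0, 0.2, 0.0]
items = {
    "running shoes": [0.9, 0.1, 0.0],
    "budget apps": [0.1, 0.8, 0.1],
    "trail maps": [0.5, 0.0, 0.6],
    "gym memberships": [0.8, 0.3, 0.1],
}

for name, score in top_k(query, items, k=2):
    print(f"{name}: {score:.3f}")
```

The explicit tie-break on name is a small design choice that pays off. Two items with identical scores will always come back in the same order, so the ranking is fully reproducible.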

Choose Tools That Fit: Efficiency is the New Expertise

Six months ago, I would have doubled down on prompt engineering without a second thought. Looking back, it’s wild how much time I spent trying to outsmart problems with heavy-duty AI, only to find a smoother path using straightforward embeddings. That shift—away from convoluted intuition and toward simple math—changed everything for me.

You’ll feel the relief right away. With reliable, fast solutions, the guesswork and frustration drop away. You keep your expertise (and sanity) intact, and the process just works. I wish I’d figured this out sooner.

If you’re ready to spend less time wrestling with tools and more time sharing your expertise, try generating your next article for free with Captain AI and see how simple results can be.

Pushing for efficiency isn’t cutting corners. It’s how you put your experience to work alongside the right technology. Honestly, it’s more satisfying, because you know exactly why the result is good—and you can trust it, every time.

So next time you scope a project, pause for a second. Before you choose, ask yourself: is there a simpler, more direct tool you’re overlooking? Give it a shot. You might be surprised how often being an “AI power user” really means using the best tool, not just the shiniest.

And I should admit—even now, sometimes I still reach for LLMs out of habit. Maybe that’s one itch I’ll always have to wrestle with.

Enjoyed this post? For more insights on engineering leadership, mindful productivity, and navigating the modern workday, follow me on LinkedIn to stay inspired and join the conversation.