How AI Understands Meaning: Unlocking Context for Relevant Results

When “Smart” AI Sounds Anything But

If you’ve ever asked an AI tool for help—maybe to find insights in a heap of customer feedback, or to generate a bit of branded language—you probably know the feeling: bland, surface-level stuff, or answers that make you wonder if it even read your request. I can’t tell you how often I’ve stared at the screen, wondering if the thing just doesn’t “get” me, or if I’m missing something obvious.

For a long time, I assumed this was just about keywords. Not enough input? Add more context to the prompt. Too generic? Reword what I wanted with different phrases. I spent weeks tweaking instructions, trying to reverse-engineer what would get me something actually useful.

But here’s the real snag. These issues aren’t just AI being “not smart enough,” or some flaw in the system. They point to something bigger: the gap between how we assume AI understands meaning and how machines actually process language. If we’re stuck in the loop of changing words and expecting better results, we’re missing the heart of it.

That old advice—just stuff in more or different keywords—doesn’t solve the core problem. You and I don’t want more output. We want the right output. There’s a bigger shift waiting, and it doesn’t come from fiddling with search terms.

And honestly? The term “embeddings” made no sense to me. It sounded like “webbing”—webs of words. I couldn’t internalize it.

Tokens, Embeddings, and How AI Understands Meaning: The Strange Math Behind It

Here’s where I got stuck. Tokens I could grasp: just pieces of words or phrases, neat little building blocks the AI chews through one by one. But embeddings? The leap from counting tokens to somehow mapping meaning as “distance” between words knocked me sideways. I wasn’t the only one. Most leaders I meet nod along until this part, then their faces go a bit blank. It felt like trying to picture “smell” as a shape; something didn’t compute.

Six months ago, I honestly thought embeddings were just a fancy word for “word list with numbers.” Turns out, it’s closer to plotting words on an invisible map drawn from patterns in real language.

The first real crack in my confusion came when someone showed me you could actually do math with meaning. Not just “compare” words, but literally add and subtract them, and the AI would somehow know what you meant. That was new. Maybe you’ve seen this before and shrugged, but for me, that was the moment things tilted. Meaning didn’t have to be an uncrackable black box—it could be measured, shifted, almost… mapped, if that’s not too weird a word.

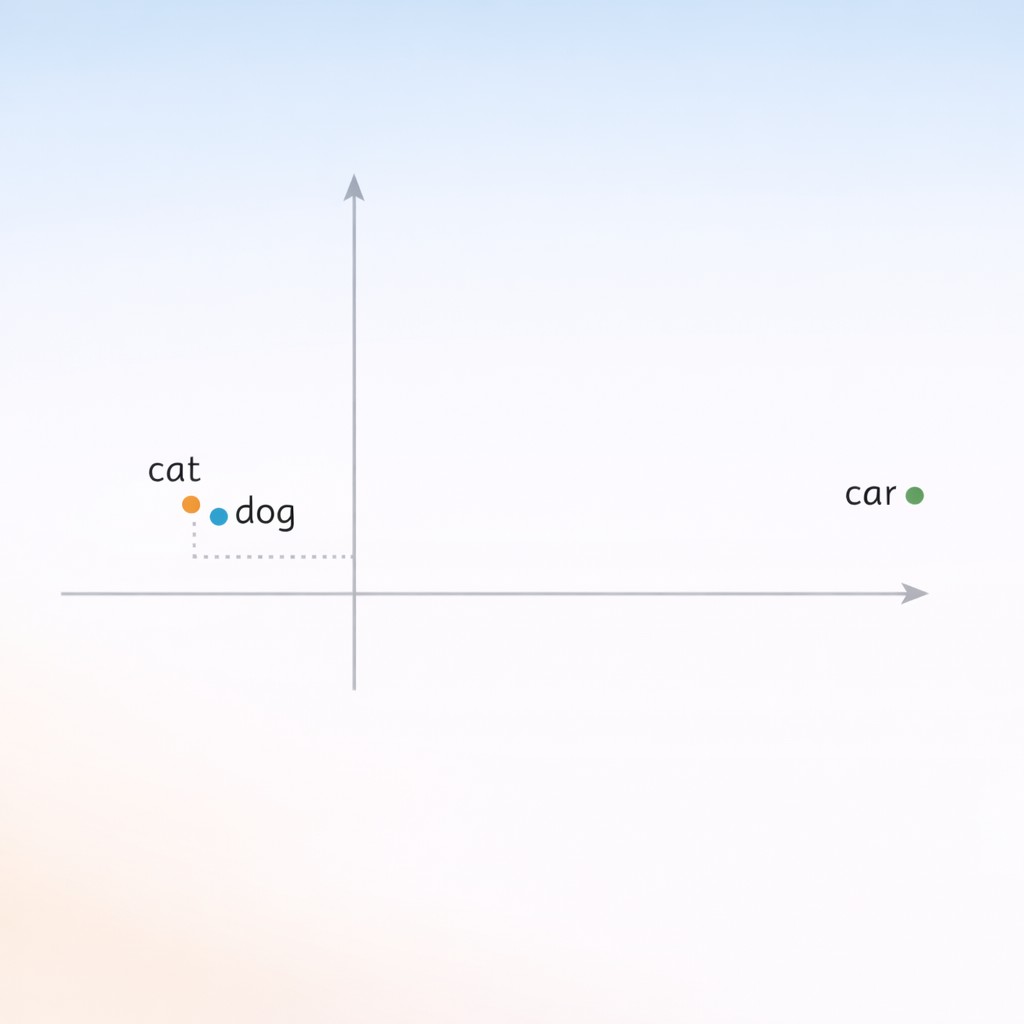

Take the classic “cat,” “dog,” and “car” example. In the AI’s world, “cat” and “dog” show up in nearly the same contexts: “feed the ___”, “walk the ___”, “pet the ___.” They’re close neighbors because their jobs, both in sentences and in the logic of what people do, are similar. “Car”? “Drive the car,” “park the car,” “repair the car.” Its contexts are so different that its “location” is somewhere else entirely. That is what embedding vectors capture: words stop being flat, static labels and become points in a landscape shaped by context.

That’s what an embedding is. It’s a set of numbers, not random but learned from huge amounts of text, that encodes which words show up together, which can swap in for each other, and which never cross paths. Each word gets a kind of longitude and latitude (with far more dimensions than a map, often hundreds), and because those coordinates are numbers, relationships between words can be measured, not just looked up.
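To make the “coordinates” idea concrete, here is a toy sketch in Python. It is a simplification, not how modern models work: instead of learning dense vectors the way word2vec-style models do, it just counts which verbs each noun appears with in a tiny invented corpus (the cat, dog, and car phrases above, plus a hypothetical “wash the ___” context) and uses those counts as coordinates. Cosine similarity then measures how closely two words’ context patterns point in the same direction.

```python
import math
from collections import Counter

# Tiny invented corpus: every phrase has the shape "<verb> the <noun>".
CORPUS = [
    "feed the cat", "walk the cat", "pet the cat",
    "feed the dog", "walk the dog", "pet the dog", "wash the dog",
    "drive the car", "park the car", "repair the car", "wash the car",
]


def context_vectors(phrases):
    """Map each noun to a Counter of the verbs (contexts) it appears with."""
    vectors = {}
    for phrase in phrases:
        verb, _, noun = phrase.split()
        vectors.setdefault(noun, Counter())[verb] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity of two sparse count vectors: 1.0 = same direction, 0.0 = no overlap."""
    dot = sum(count * b[key] for key, count in a.items())  # missing keys count as 0
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


vecs = context_vectors(CORPUS)
print(round(cosine(vecs["cat"], vecs["dog"]), 2))  # 0.87
print(round(cosine(vecs["dog"], vecs["car"]), 2))  # 0.25
print(round(cosine(vecs["cat"], vecs["car"]), 2))  # 0.0
```

Cat and dog score high because they share most of their contexts; cat and car share none, so they score zero. Dog and car overlap only on “wash,” so they land in between. Real embeddings capture the same intuition with learned, dense vectors instead of raw counts.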

King – man + woman = queen shouldn’t work. But it does.

I spent a good twenty minutes trying to “break” this in my head, testing whether it was a party trick or something real. Do the arithmetic on the vectors (start at king, subtract man, add woman) and the closest word to the result is queen, because the vectors encode something like a consistent gender direction. That was the first time I realized this approach isn’t just a curiosity; it’s genuinely more powerful than keyword-tweaking. A whole world opens up. I didn’t get all the math right away, and I won’t pretend I could prove why it works, but seeing it in action, feeling that snap of “oh, so that’s how meaning becomes math,” was the relief I’d been looking for.
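Here is a minimal sketch of that analogy arithmetic, assuming hand-made three-dimensional vectors (invented for illustration, not taken from any trained model) whose axes loosely stand for royalty, maleness, and being a person. The `analogy` function is a hypothetical helper: it subtracts and adds the vectors, then returns the nearest remaining word by cosine similarity, leaving out the three input words, which is the usual way these analogy tests are scored.

```python
import math

# Hypothetical hand-made vectors; axes loosely mean [royalty, maleness, is_person].
VECTORS = {
    "king":   [0.9, 0.9, 1.0],
    "queen":  [0.9, 0.1, 1.0],
    "man":    [0.1, 0.9, 1.0],
    "woman":  [0.1, 0.1, 1.0],
    "prince": [0.7, 0.9, 1.0],
    "car":    [0.0, 0.5, 0.0],
}


def cosine(a, b):
    """Cosine similarity of two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def analogy(a, b, c, vocab):
    """Solve 'a - b + c = ?': nearest word to the result, excluding the three inputs."""
    target = [x - y + z for x, y, z in zip(vocab[a], vocab[b], vocab[c])]
    candidates = [w for w in vocab if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(vocab[w], target))


print(analogy("king", "man", "woman", VECTORS))  # queen
```

In a trained model the “gender” and “royalty” directions are never this clean, and the result usually lands near queen rather than exactly on it; the nearest-neighbor step is what makes the analogy come out.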

Sometimes, trying to think in all those dimensions, my brain just takes an odd left turn. For reasons I can’t really explain, the last time I tried to imagine this “landscape of context,” I ended up remembering a time I got myself lost in a hedge maze—literally lost, not metaphorically. Circling the same dead ends, convinced I’d recognize a shortcut if I saw it. That’s how learning embeddings has sometimes been for me: you double back, hit the same puzzles, then suddenly see the opening that was right there the whole time.

If you find yourself skeptical, that’s natural. This shift isn’t about learning one more tool or trick. It’s about knowing that, under the hood, AI is working with context, not just words—and that opens up everything.

Why Vector-Based Retrieval Crushes Keywords

Here’s where the usual keyword search falls over. Drop a phrase like “boost team motivation” into a keyword-driven engine, and you’ll mostly get articles with those three words squished together, whether the piece is about basketball or summer camp. It’s all surface similarity, with no sense of what you actually care about. Semantic search changes that: instead of ranking pages by keyword matches, Transformer-based models turn both your question and the content into embeddings and compare their meanings. The leap is that semantic retrieval tries to get under the skin of your question: not just the letters, but the intent and context lurking underneath.
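To see why keyword matching falls over, here is a bare-bones keyword scorer in Python. It is a deliberately naive sketch (real engines use weighting schemes such as TF-IDF or BM25 rather than raw overlap counts), and the two document titles are invented for illustration.

```python
import re


def tokenize(text):
    """Lowercase the text and keep only runs of letters, as a set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_score(query, doc):
    """Count how many query words appear verbatim in the document."""
    return len(tokenize(query) & tokenize(doc))


docs = {
    "basketball-recap": "How our basketball team found the motivation to boost its defense",
    "sales-recovery": "Recovering from a missed target: helping salespeople regain their drive",
}
query = "boost team motivation"

# Rank documents by keyword overlap, highest first.
ranked = sorted(docs, key=lambda d: keyword_score(query, docs[d]), reverse=True)
print(ranked)  # ['basketball-recap', 'sales-recovery']
```

The basketball recap matches all three words and wins; the sales post, which is what a manager after a rough quarter actually wants, scores zero because it uses none of them.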

Let’s ground that in a real, practical moment. Imagine you’re searching for ways to encourage your sales team after a rough quarter. If you use a system relying on embeddings and vector search, you can type in “help team recalibrate after setbacks,” and it actually finds that post you wrote last year about recovering from a missed target—even though you never once used the word “recalibrate.” It’s like the system finally gets what you mean, not just what you say.
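And here is the vector-search version of the same idea, as a minimal sketch. It assumes an embedding model has already turned the query and each post into vectors; since no such model is part of this example, the vectors below are hypothetical, hand-picked stand-ins along three labeled axes, and the document ids are invented. Search is then just ranking posts by cosine similarity to the query vector.

```python
import math

# Hypothetical embeddings standing in for an embedding model's output.
# Real models produce hundreds of dimensions; these axes loosely mean
# [setback/recovery, sports, sales].
DOC_VECTORS = {
    "basketball-recap": [0.1, 0.9, 0.0],
    "sales-recovery":   [0.9, 0.0, 0.7],
    "summer-camp":      [0.2, 0.6, 0.0],
}
# Hypothetical embedding of the query "help team recalibrate after setbacks".
query_vector = [0.8, 0.1, 0.4]


def cosine(a, b):
    """Cosine similarity of two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def search(query_vec, index, k=2):
    """Return the ids of the k documents whose vectors point most nearly the same way as the query."""
    return sorted(index, key=lambda d: cosine(query_vec, index[d]), reverse=True)[:k]


print(search(query_vector, DOC_VECTORS))  # ['sales-recovery', 'summer-camp']
```

The query never has to say “missed target”: the sales post wins because its vector points in nearly the same direction as the query’s. At scale you would typically use a vector database or an approximate-nearest-neighbor index instead of sorting every document, but the ranking idea is the same.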

This, right here, is how AI gets at meaning. It isn’t memorizing dictionary definitions or rigid templates. Instead, it learns semantic relationships from how language is used in context, and it encodes all that nuance as positions in this weird mathematical space, pulling ideas together by how close they really are. That’s how AI “understands” meaning: not through definitions, but through relationships captured in vector space.

Now, if you’ve reached this point and are thinking, “Isn’t this still just a lot of technical magic? My business can’t spare time for theory when we just need results”—that’s a fair concern. I won’t sugarcoat it, the first time I saw vector diagrams I thought, “This is a data scientist’s playground, not mine.”

But here’s the reality check. There’s far too much information out there for keyword matching to keep up, so semantic search now goes further, using NLP and machine learning to interpret meaning in context so that search feels more human. In plain speak: businesses using this approach don’t just get “okay” output; they unlock content and answers that fit the way they actually talk and think. The impact is dead practical: smarter content, faster workflows, and a real chance to stand out in markets drowning in sameness.

It reminds me of that math leap from before—switching from “count the words” to “measure the meaning.” It’s not just a clever upgrade, it’s the difference between being heard and getting lost. And once you see it in action, it’s hard to go back.

Making Vector Meaning Work for Your Business

If you’ve made it this far, let’s put a stake in the ground. Understanding embeddings isn’t just an academic exercise. It’s a lever—a way to find, generate, and reuse content that actually lines up with your voice, not just the latest buzzwords. I’ll admit, for a long time, this felt like extra work that might not pay off. But once the basics clicked, the payoff was surprisingly immediate.

Here’s the part that’s practical. With AI tools tuned to use vector search, it suddenly becomes possible to surface real examples, old case studies, or specific turns of phrase that actually reflect how you speak or write, not just things that hit the right keywords. The difference is subtle, but powerful. Instead of getting back some “average” marketing catchphrase, you can rediscover that quirky opening line from a blog post you forgot you’d written, or have the AI remix your sales stories with your actual language in the mix.

This is the “why” behind the whole pivot. Embeddings are why semantic depth beats keyword density: machines now read for meaning and relationships, not repetition. How AI interprets language is shifting from counting words to mapping concepts in context. That’s the shift.

If you’re wondering how to start, here’s what I’d recommend. First, just do a quick review of the tools you’re already using—many platforms now have options for “semantic” or “vector-based” search features. Turn them on and see what changes. Second, if you or your team are looking to upgrade, explicitly ask vendors if their systems use embeddings (there’s no harm in making them clarify). And third, try a “meaning math” prompt: ask your AI to find content similar to your own, or play with the “king – man + woman = queen” analogy on words you care about. Often just testing what comes out is enough to spark those “aha” moments.

If you want content that captures your voice and actual meaning, try Captain AI to generate an article for free and see how it handles your unique perspective.

All that said, if any part of this still feels a little intimidating, that’s normal. Mastering vector meaning isn’t about memorizing equations; it’s about making sure the technology serves your point of view. You don’t need to be a mathematician to unlock relevance—you just need to aim for substance over surface. If you stick with it, clarity shows up faster than you’d expect.

But even now, there’s a part of me that still doesn’t quite trust that all the nuance of human meaning can get crammed into multidimensional vectors. Maybe it’s just habit, but I keep reaching for words the old way sometimes—one keyword at a time—and wondering if there’s still something I’m missing. Maybe that’s okay. I’m still figuring out where the map ends and the maze begins.

Enjoyed this post? For more insights on engineering leadership, mindful productivity, and navigating the modern workday, follow me on LinkedIn to stay inspired and join the conversation.